The Empire in your pocket

Big Tech is mining your life. Here are 13 ways to make it harder for them

Hi dear one,

Something changed in me this week.

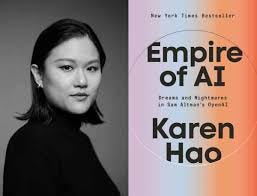

I’ve been diving deep into the work of investigative journalist Karen Hao, and I haven’t been able to look at my screen the same way since. I figured out why in the shower this morning, which is where my best ideas sometimes come to me.

I had been treating AI like an inevitable tide. Something massive and unstoppable that I just had to learn to swim in. But tides are moved by the moon.

This tide is being moved by algorithms and boardrooms full of white middle-aged men. And we don’t have to just let it sweep us away.

Karen spent years tracing the AI supply chain across five continents, from water activists in Chile to data labellers in Kenya. She is the one who gave us the term AI Colonialism to describe how tech giants extract resources from the many to enrich the few.

We talk about AI like it’s an ethereal, magical cloud. Karen’s new book, Empire of AI, pulls that curtain back hard.

AI isn’t just math. It’s a physical power play that looks a lot more like the 19th century than we’d like to admit.

The infrastructure trap nobody talks about

Think about the old empires building railroads. Those were 100-year assets. Even after an empire left a country, the tracks and bridges stayed. Permanent structures that local people could eventually own and use.

But AI is built on chips that expire in about three years.

That one fact stopped me cold.

Countries in the Global South can never truly own their tech future because the hardware dies so fast. They are stuck in a cycle of renting the newest chips from Silicon Valley just to keep their systems running. A built-in, permanent subscription to someone else’s empire.

The ghost workers

We are told AI is automated. Karen says it is actually human-powered.

Behind every clean AI response are millions of what she calls ghost workers in places like Kenya, the Philippines, and Venezuela. Paid pennies to label data and, more painfully, to filter out the dark and toxic corners of the internet so we never see them.

Every time we use AI, someone absorbed something ugly on our behalf.

In the old days, empires colonized land for gold, spices, and rubber. Today, they colonize our digital lives for data. Big Tech treats our photos, our words, and our creative output as a free natural resource, extracted without consent. They mine the internet, refine it into a model, and sell it back to us.

Let that sink in for a second. It certainly did for me.

So what do we actually do?

Karen is hopeful. Genuinely. And after thinking about all of this, so am I.

She talks about Sovereign AI and looking for what she calls the bicycles of this moment. Small, efficient, community-led tools rather than the massive steamrollers of Big Tech.

A few places worth your attention and support:

Mozilla Common Voice is building an open-source library of human voices that no corporation owns. You can donate your voice by reading sentences aloud. Five minutes of your time quietly pushes back against Big Tech’s monopoly on how AI hears us.

DAIR (Distributed AI Research Institute) is doing community-rooted research on the real labor and harm stories behind AI. Their podcast, Mystery AI Hype Theater 3000, is exactly what it sounds like and absolutely worth your time.

The Electronic Frontier Foundation (EFF) has been defending digital privacy since long before AI was a household word. Their Right to Repair campaigns are directly tied to breaking that three-year hardware dependency cycle.

AlgorithmWatch monitors how automated systems affect human rights. Less doom-scrolling, more actual journalism.

The Public AI Network (PAINT) is pushing for AI to be treated as public infrastructure, like roads or water. If Sovereign AI interests you, this is where that conversation is happening at government level.

The Open Knowledge Foundation (OKFN) builds tools so individuals and small groups can manage their own data without defaulting to Big Tech. Their Open Data Editor requires zero coding knowledge.

The part where we take our power back

You have more power in this than you think. I have put together thirteen practical moves you can start today. No tech skills needed. No big lifestyle overhaul. Just real moves that actually matter.

Here are the first five, on me.

1. Practice Data Refusal. Whenever an app asks to use your data to improve their products or train their AI, click No. Your data is a resource. Do not give it away for free.

2. Try Open Weight Models. Explore tools like HuggingChat or Mistral’s own interface. These are built on open models that no single company completely owns. Same ease of use, significantly less extraction.

3. Support Right to Repair. The longer a chip stays in use, the less power the hardware monopoly holds. Advocate for tech that lasts.

4. Pay Attention to Who Made It. Before you adopt a new AI tool, spend two minutes asking: Is this company transparent about its labor practices? Do they acknowledge the ghost workers? Conscious use is still use, but it is not the same as unconscious consumption.

5. Practice Chat Hygiene. Every month or two, clear your conversation histories, turn off memory toggles, and clear cached AI outputs. What the system does not permanently store cannot be repackaged and sold back to you later.

Steps 6 through 13 go deeper. They are the ones that protect your creative work, your original thinking, and the digital soil you are standing on.

If you have found this useful and want to go further with me, a paid subscription unlocks the rest of the checklist, plus future deep dives, exclusive tools, and a community of people doing exactly this together.

I built this because nothing like it existed when I needed it. If it’s helping you, upgrading is the kindest way to say so.

YES, I WANT THE FULL CHECKLIST